This is a rough overview of the O2 computing system, intended to give you an idea of the general architecture. For more details, please refer to other documents such as the TDR.

Requirements

- After LS2, the ALICE experiment will have gone through a massive upgrade to handle minimum-bias PbPb interactions at 50 kHz. This will result in an event rate 100 times higher than during Run 1 (2010-2012).
- Moreover, the physics topics addressed by the ALICE upgrade are characterised by a very small signal-to-noise ratio, which requires very large statistics, and by a large background, which makes triggering techniques very inefficient, if not impossible.
- Finally, the inherent rate of the Time Projection Chamber (TPC) detector is lower than 50 kHz, which calls for a continuous, non-triggered read-out.

A new computing system: ALICE O2

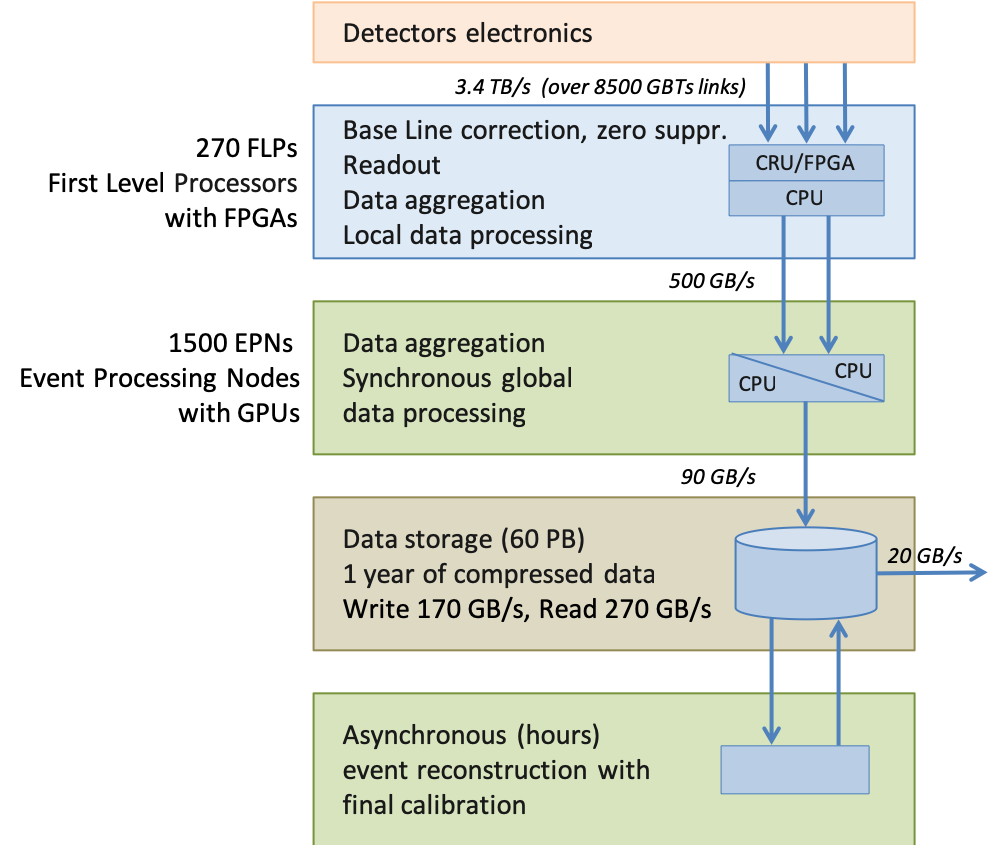

After the upgrade, the readout throughput will increase to 3.4 TB/s. Storing such a data volume is quite challenging.

This led us to design a new computing system for Runs 3 and 4. In this new system, the data is compressed on the fly by partial online reconstruction, achieving a compression factor of 30. In this unified design, the traditional boundary between online and offline tasks is much thinner. As a consequence, we have a common computing system for online and offline, named “O2”.
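To put the two figures above side by side, here is a small back-of-the-envelope sketch. It assumes decimal units (1 TB = 1000 GB) and that the compression factor of 30 applies uniformly to the whole 3.4 TB/s readout stream. The function name is purely illustrative and is not part of any O2 software.

```python
# Rough estimate based only on the two numbers quoted in the text.
READOUT_TB_PER_S = 3.4    # readout throughput after the upgrade
COMPRESSION_FACTOR = 30   # from partial online reconstruction


def compressed_throughput_gb_per_s(readout_tb_per_s: float, factor: float) -> float:
    """Return the throughput after compression, in GB/s (assumes 1 TB = 1000 GB)."""
    return readout_tb_per_s * 1000.0 / factor


if __name__ == "__main__":
    rate = compressed_throughput_gb_per_s(READOUT_TB_PER_S, COMPRESSION_FACTOR)
    print(f"Throughput after compression: about {rate:.0f} GB/s")
```

Under these assumptions, the compressed stream is on the order of 100 GB/s, which gives a sense of why compressing online matters before anything is written to storage.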

Data flow

The O2 data flow is depicted below in a simplified way. A more detailed description is available in the TDR.